As an avid Pinterest user, I’ve noticed that its content tends to lack context or credibility. Users often leave comments with questions that go unanswered.

This is an issue because users might not get the most out of their experience. Without context, they can’t deepen their understanding of what they are seeing and may miss out on similar content that they would enjoy.

Sole designer, independent project

Figma, FigJam, Maze

My research goals were to learn about 1) how people use Pinterest generally, and 2) their experiences looking for context about pins.

The study was structured as:

5 Pinterest users

Moderated, remote

15-30 minutes

After completing five interviews, I arranged the content of my conversations into an affinity map. The map revealed a lot about how users interact with Pinterest in their daily lives.

Unsurprisingly, all 5 participants said they use Pinterest for visual inspiration and/or as a tool to organize content. Other findings included:

When it came to the central problem I was exploring, I arrived at two key takeaways:

5/5 users clicked the source link to learn more about a pin, but 3/5 expected the experience to be unhelpful or annoying.

Overall, users said they search for more information about a pin occasionally rather than often. But I wondered how this might change if the information were easy to find.

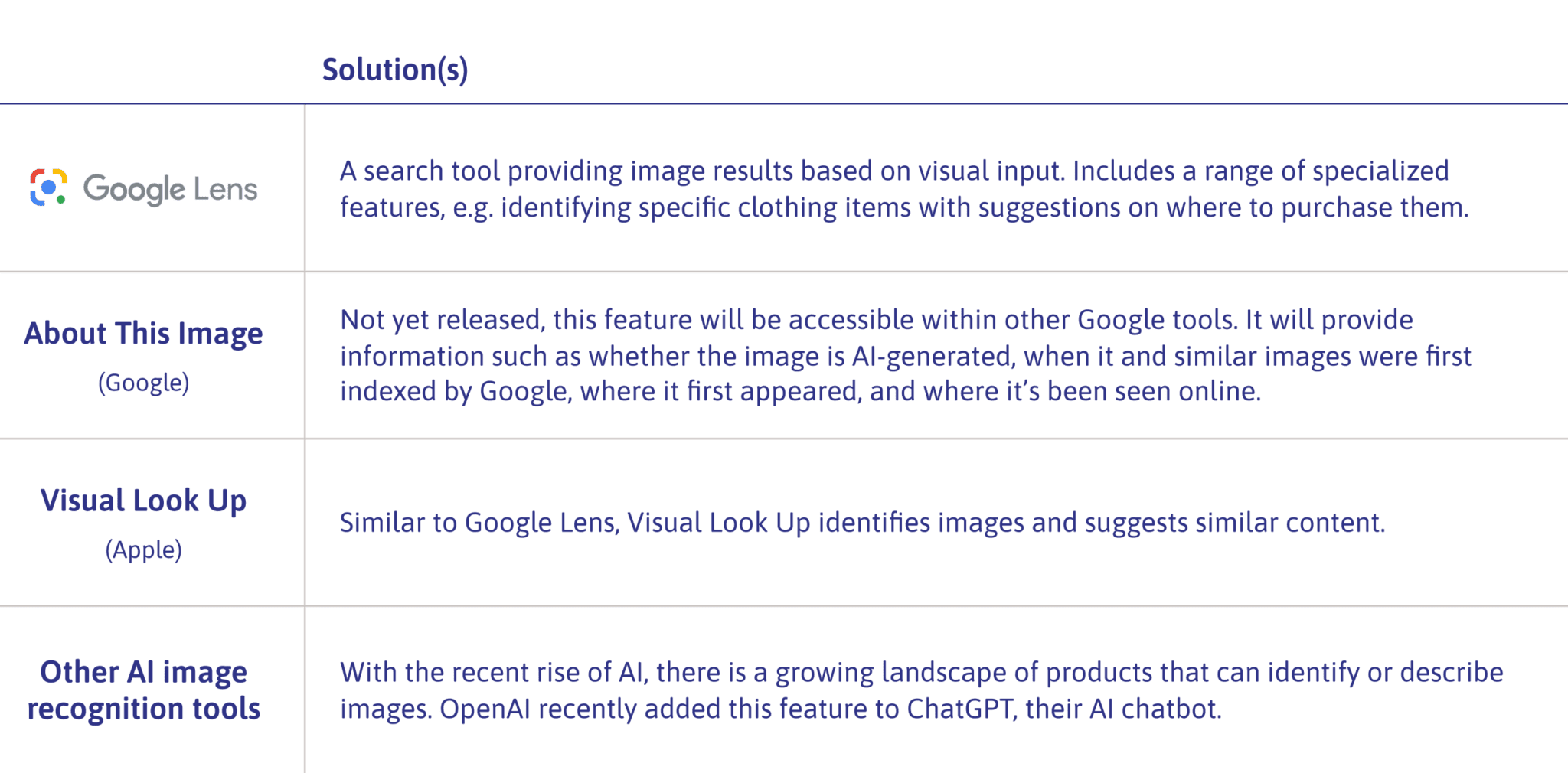

5/5 users would consider looking up a pin using Google Lens or text search. Like the source link, this takes the user to an external site, which is undesirable from a business perspective.

Only 1/5 users tried using Pinterest’s built-in visual search, suggesting that they either don’t know about the tool, or don’t expect it to be helpful.

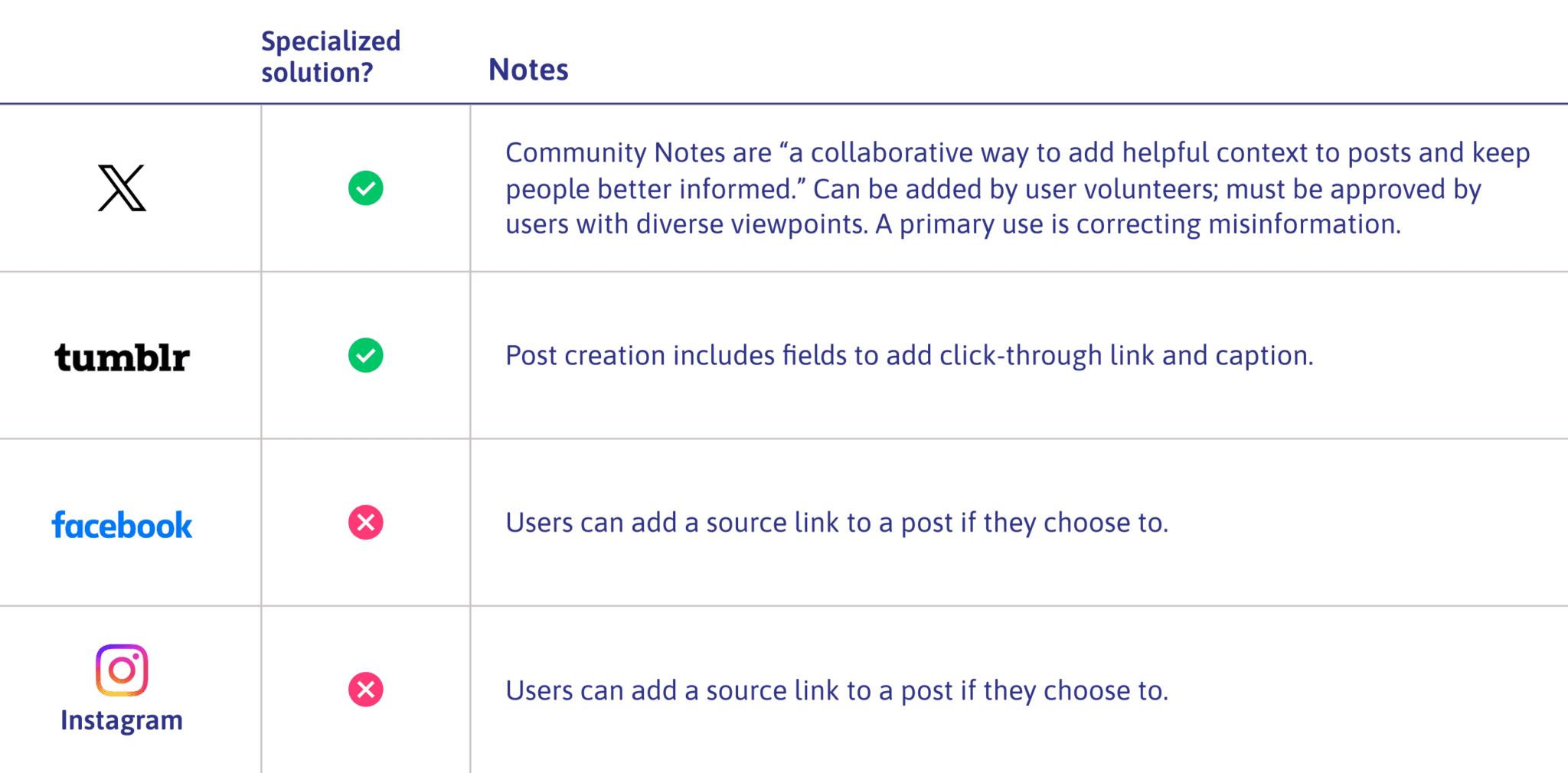

Now that I understood the problem I would be solving, I needed to learn about how existing products tackle similar issues. I found that competitors fell into two categories.

Aside from X’s user-driven Community Notes, other social media platforms offered few solutions for identifying or explaining content.

Meanwhile, I found a range of rapidly developing products using AI to identify or explain images.

I anticipated exploring the realms of user input and AI image recognition further when I reached the idea generation stage.

Two point-of-view (POV) statements capture the main issues uncovered in my user interviews. Corresponding “how might we” questions would guide my thinking about potential solutions.

Users seeking information about a pin often find the experience difficult, give up unsatisfied, or don’t try due to low expectations of the outcome.

…help Pinterest users more easily find the information they seek about a pin?

Users seeking information about a pin often leave the Pinterest site to visit a source link or use a tool such as Google Lens. This disrupts the continuity of the user experience.

…help Pinterest users find the information they seek without having to leave the Pinterest site?

At this point, I reflected on my work so far. To come up with targeted solutions, I would need to consider:

I began by generating as many ideas as I could on sticky notes in FigJam. Next, I assessed them in three stages, ending with a set of three concepts that I planned to incorporate into the new feature:

I referred back to my user research to flesh out the details of the proposed feature.

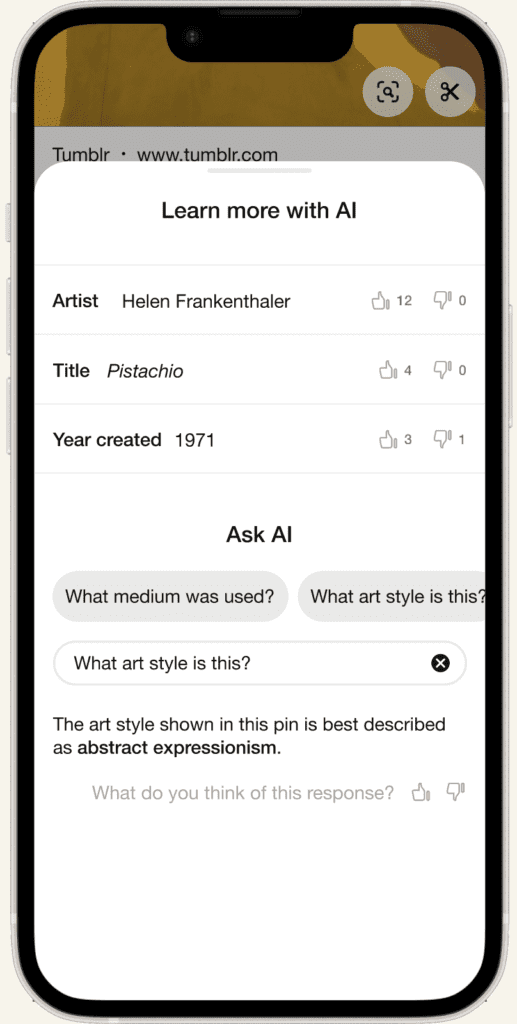

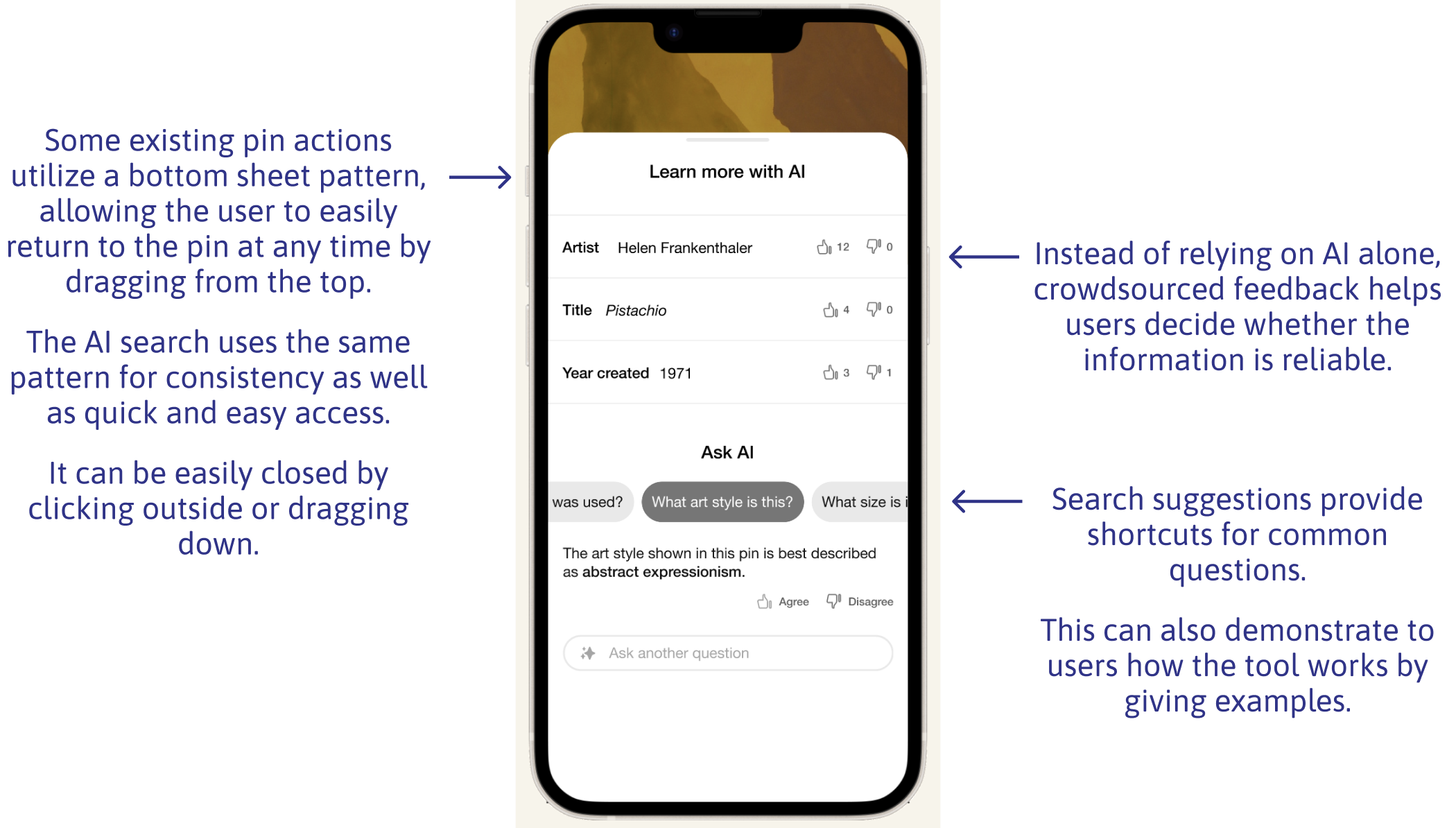

Users can learn more about pins through an AI search within the existing Pinterest Lens feature. Results are generated in response to questions, e.g. “Where is this?”

This eliminates the need to spend time digging through source links or running a reverse image search, both common frustrations in my user research. It also keeps users on the Pinterest site.

Users can see answers to previous users’ AI searches and agree or disagree. This provides a human check on potentially fallible AI answers. It also offers an outlet for feedback that may previously have landed in unhelpful comment threads.

A brief description is auto-generated for pins that have been identified via AI image search and reach a certain upvote threshold. This replaces source link excerpts, which users reported to be frequently confusing and irrelevant.

When users click a source link on a pin, a preview opens in an overlay, with relevant information highlighted. This keeps users on the Pinterest site or app and reduces the effort involved in locating relevant information.

Creating user and task flows for the feature helped me think through the user’s path in depth.

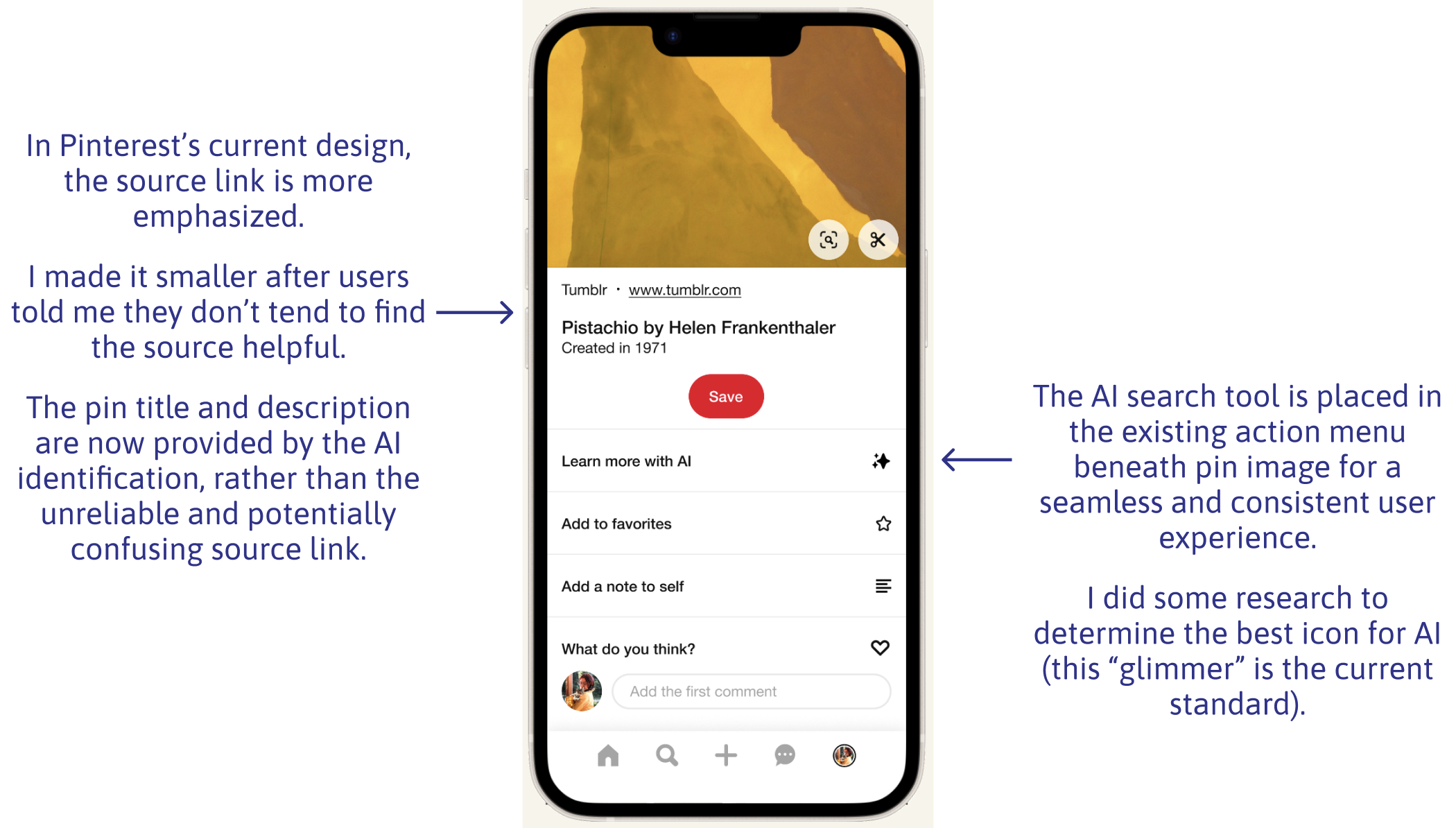

I originally planned to include an embedded source link preview in my design, but I realized that it would fall into a different task flow. I decided to take a more focused approach and pursue a single unified feature—the AI search tool.

Having defined the layout and interaction patterns for the feature, I refined the details of my wireframes and brought them together in a working prototype.

At this stage, I reworked the text and iconography to more clearly label the tool as AI, so users would have an appropriate mental model when using it.

All of my UI decisions were guided by Pinterest’s actual design system, Gestalt, including its design patterns, colors, typography, and iconography.

My goal was to find out if my solution would address the issues I had surfaced in user interviews. I designed a study comprising:

I wanted to find out if the new feature would be clear to users, if they would find it useful, and how they would feel when using it.

16 Pinterest users

Unmoderated, online

Figma prototype, Maze

Exploring the results with affinity mapping, I was happy to find that the task was fairly easy overall (rated 4.5/5) and that users saw potential utility in the feature (also rated 4.5/5).

Two issues surfaced:

1. Where to start?

3/16 testers initially clicked something other than the “Learn More with AI” button. This was not unexpected, because the task prompt was open-ended.

2. Can I trust this tool?

3/16 testers expressed concern about the reliability of AI-based tools. Their questions echoed some of the public sentiment about AI, so I knew this was an issue that needed to be addressed.

“What is the AI generated answer based on? How can I check that it’s true?”

– Usability test participant

The risk of misinformation with AI is real, so it would be unrealistic to promise accurate responses. Instead, I chose to acknowledge users’ legitimate concerns by:

1. Adding a disclaimer

This text appears when users first open the AI search tool, explaining that the information provided may not be accurate. It also includes a link to learn more.

2. Adjusting language around feedback

Rather than asking for users’ feedback in terms of agreement, I added the more open-ended prompt, “What do you think of this response?” This encourages users to feel comfortable providing feedback even if they don’t know for sure whether the information is correct.

My updated prototype incorporates the new disclaimer and the adjusted feedback language.

There are several next steps I might pursue as part of a team working toward the release of this feature. These include:

I expected that testing might uncover an aspect of the design causing confusion or difficulty. In reality, the biggest issue was trust in AI tools. I could have addressed this more proactively if I had broadened my understanding of what usability can mean.

While I was in the middle of this project, ChatGPT released a new image description feature that hadn’t been available a week earlier, when I was first researching AI solutions. It was a lesson in the importance of staying informed about a rapidly changing landscape.

This was my first time working from a design system. It provided helpful structure and reduced the time I spent on UI decisions. At the same time, I learned to consult the design system rather than make assumptions.